Transform your company with custom AI

Soren helps institutions identify, deploy, and operate AI safely in high-impact workflows.


Supported by a team from

Who we serve

We partner with institutions at every stage, from early exploration to full transformation.

Early: AI Opportunity Assessment

A one-week engagement to identify where AI can save time, reduce operational overhead, and create the greatest impact.

-

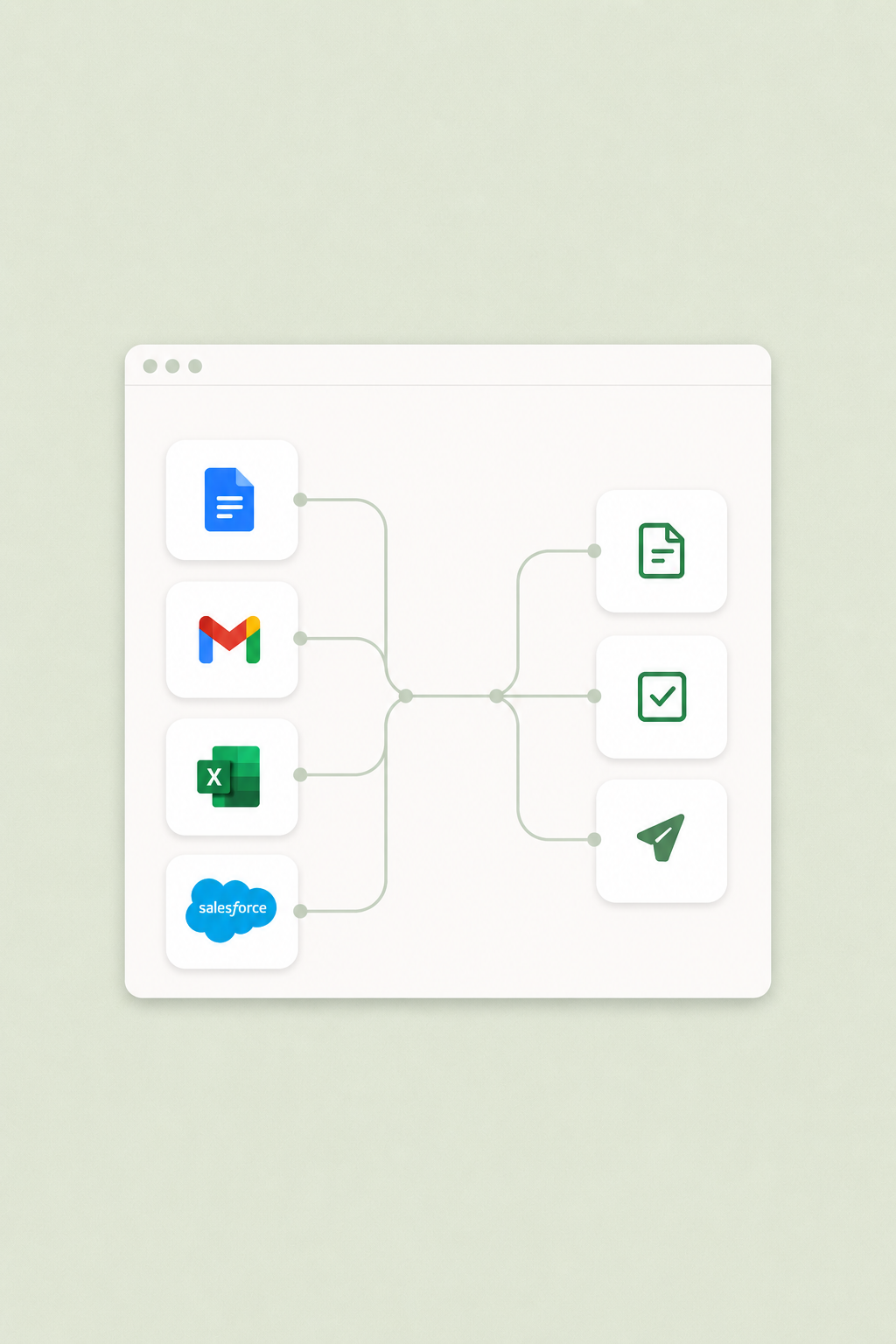

Growth Custom AI Workflows

Soren designs and deploys AI workflows tailored to your team's processes, delivering measurable ROI and a competitive advantage.

Security: Private AI Deployment

For teams with the highest security requirements. We deploy a private LLM within your environment so that sensitive data never leaves your company.

Partnering with the best

How we work

Better results, faster. Our AI-native approach.

Industries

Transforming industries the world depends on.

What drives us

AI has transformed what humans can build, understand, and automate.

But in many of the world’s most important sectors, the impact has remained limited.

These industries rely on highly sensitive data and systems built upon decades of domain expertise. General-purpose AI, for all its power, still struggles to bridge that gap safely and reliably.

We started Soren with a simple belief: AI must be safe to be trusted, and specific to be truly useful.

Our vision is to bring deeply specialized intelligence to the world’s most critical industries.

Frequently asked questions

How is Soren different from tools like Microsoft Copilot or ChatGPT?

Off-the-shelf assistants are built for everyone, so they aren't built for how your team actually works. Soren builds custom AI systems around your specific workflows, data, and standards, and runs them inside infrastructure you control. The result is a real competitive edge rather than a generic chatbot.

We're not sure where AI fits yet. Where do we start?

Most teams start with an AI Opportunity Assessment: a one-week engagement in which our engineers evaluate your automation opportunities, data posture, security posture, and culture. You come away with a clear roadmap and a ranked list of high-ROI workflows.

What does a private AI deployment actually mean?

Your models, data, and infrastructure stay in environments you control: your cloud tenant, your VPC, or fully on-premises. Nothing leaves your perimeter, and no third party trains on your data. You also get flat-rate pricing instead of unpredictable per-token billing.

How do you handle sensitive or regulated data?

We design against the regulations and standards you already operate under, including HIPAA, SOC 2, GLBA, and ISO 27001, plus any client-specific requirements. Every engagement begins with a data map, and access is scoped, logged, and auditable end to end.

Which industries and teams do you work with?

We focus on regulated and mission-critical institutions, with deep experience in legal, finance, healthcare, and government — teams where trust, compliance, and operational fit matter as much as raw model capability.

Do we need an in-house AI or engineering team to work with you?

No, and most of our clients don't have one. Our engineers handle design, deployment, and ongoing operation alongside your team, and provide documentation and training so you can stay as involved as you prefer.